LaneSight

AI-powered lane perception for real-world driving scenes.

Overview

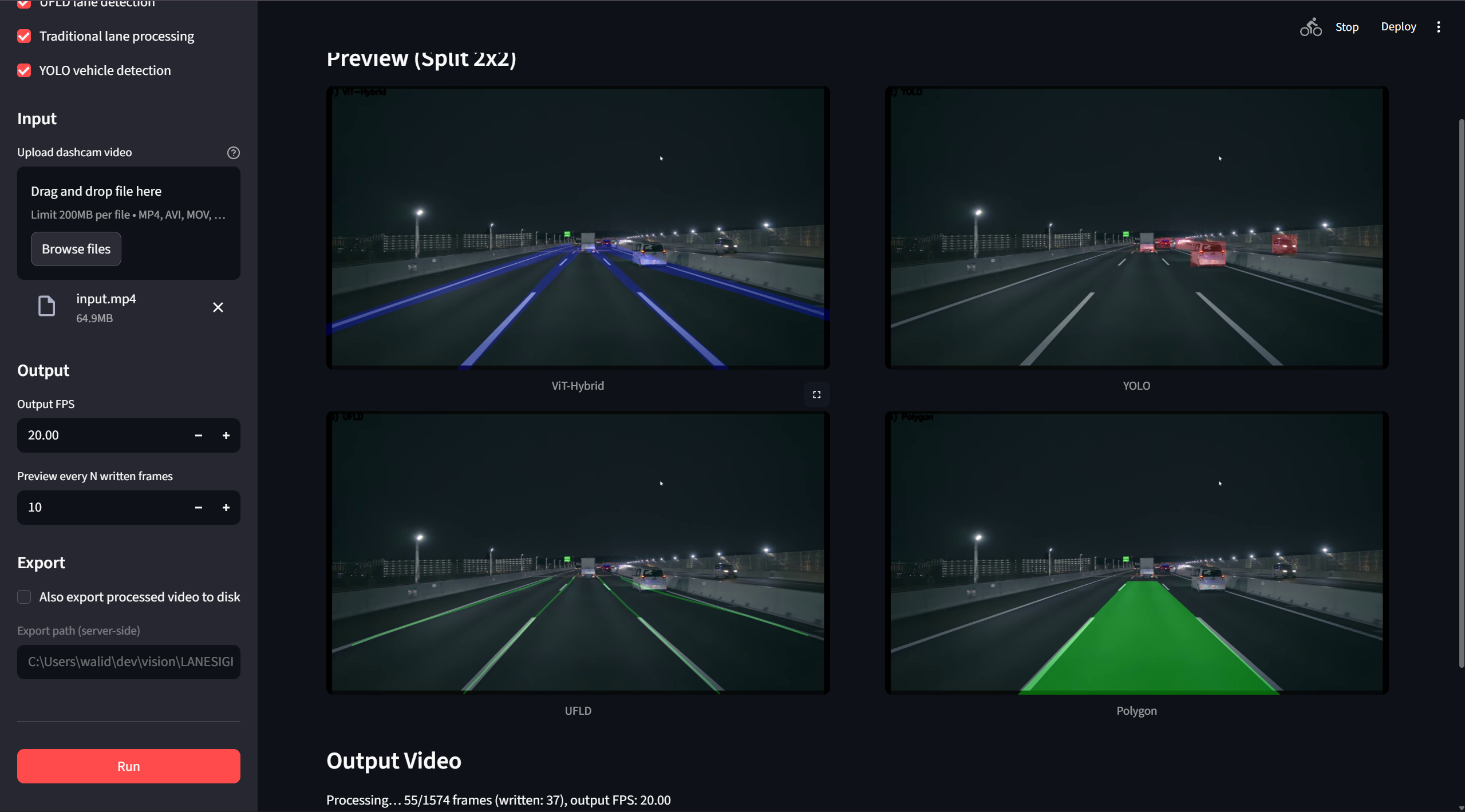

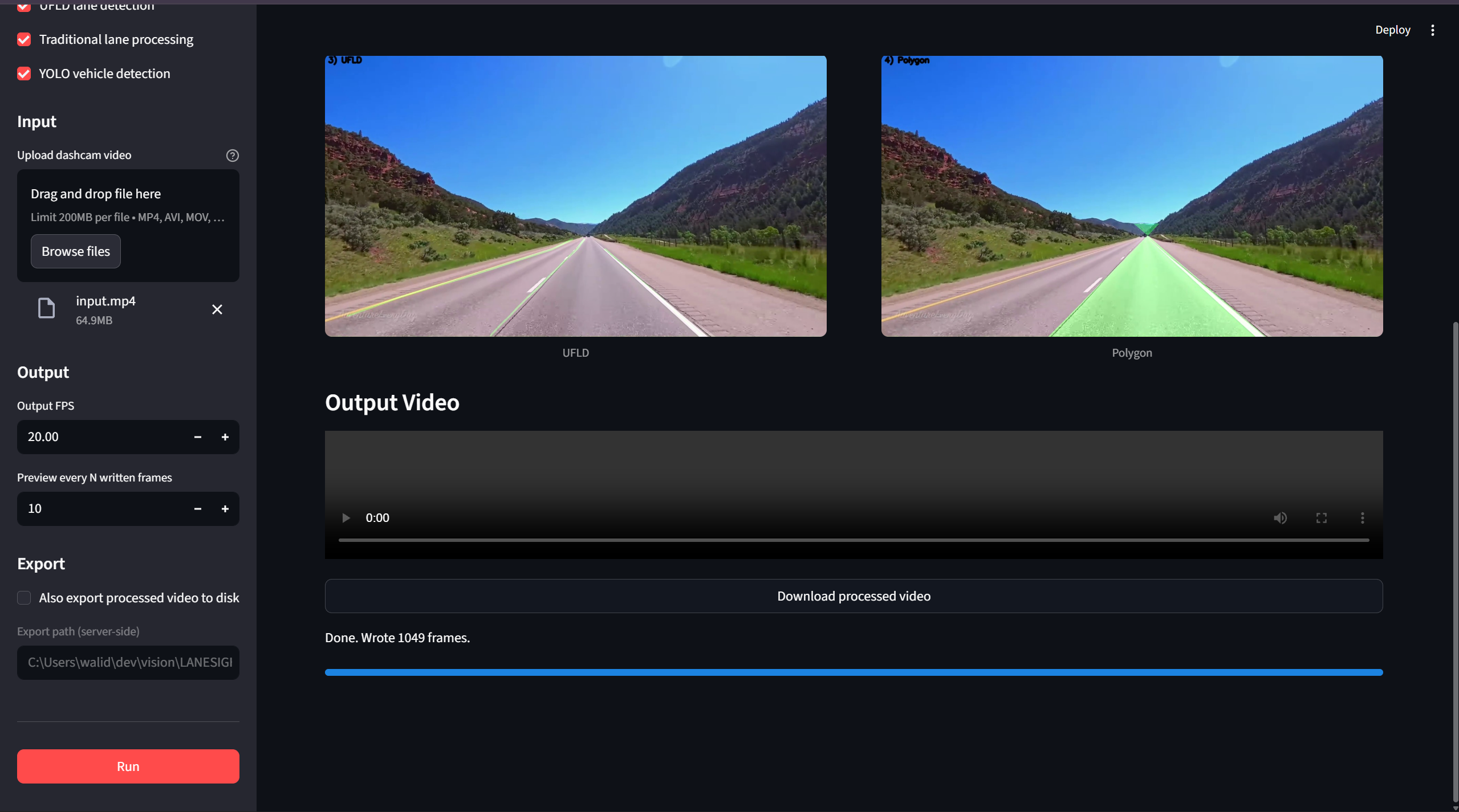

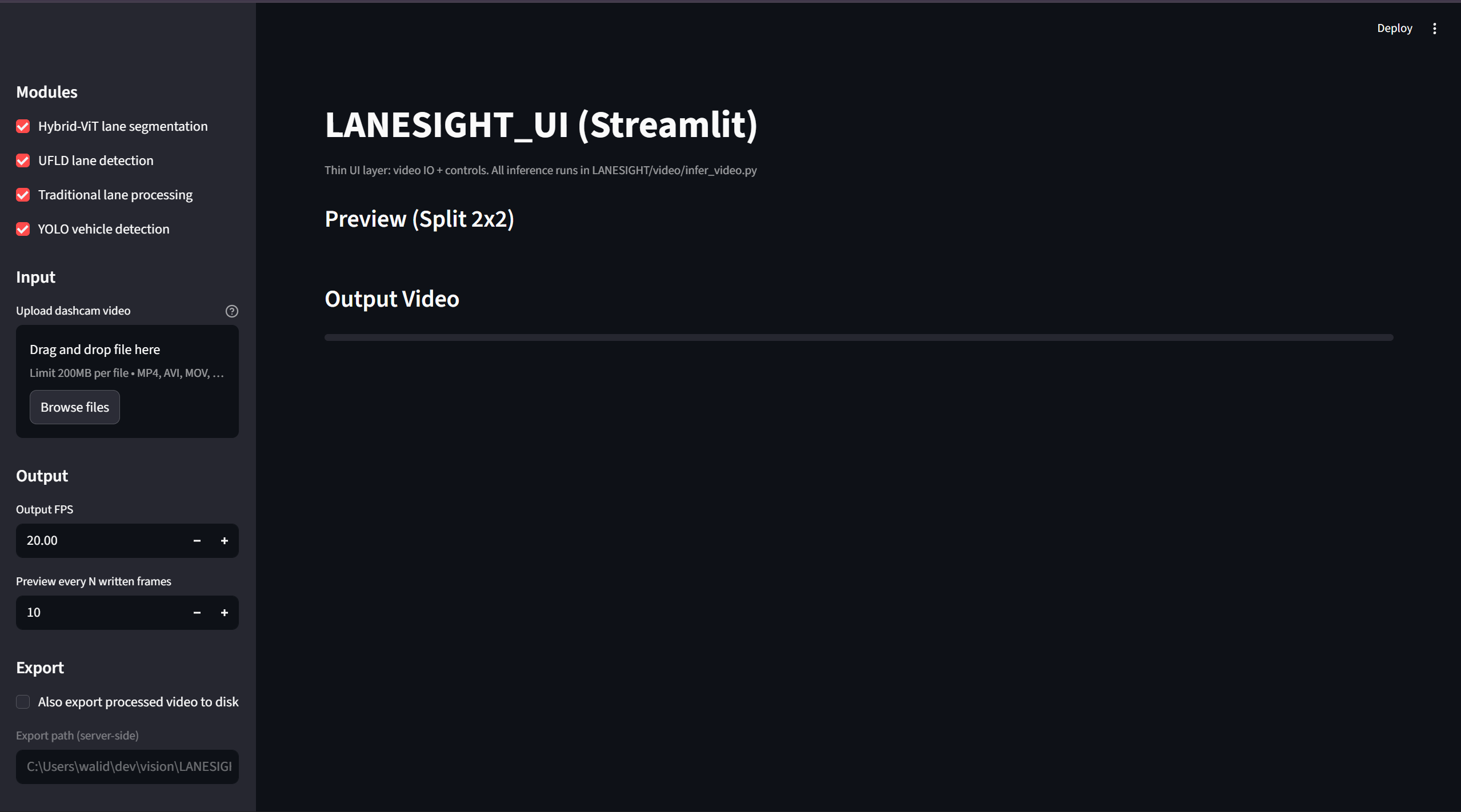

LaneSight is an end-to-end multi-paradigm lane perception system designed for real-world driving scenes.

It combines deep semantic understanding (Hybrid ViT-UNet) with geometric lane modeling (UFLD), classical vision priors, and object-aware filtering (YOLO) into a unified inference pipeline.

The core model leverages a CNN encoder with a Vision Transformer bottleneck to capture both local lane structures and long-range spatial dependencies. It is trained using class-balanced Cross-Entropy and Dice loss to handle extreme foreground/background imbalance.
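As a rough illustration of the loss described above, here is a minimal NumPy sketch of a class-balanced cross-entropy term combined with a Dice term for binary lane masks. The function name `class_balanced_ce_dice`, the inverse-frequency weighting, and the `dice_weight` parameter are assumptions made for this example. The project's actual weighting scheme and training code are not shown here and may differ.

```python
import numpy as np

def class_balanced_ce_dice(probs, target, dice_weight=1.0, eps=1e-6):
    """Illustrative combined loss for binary lane segmentation.

    probs  : predicted lane probability per pixel, values in (0, 1)
    target : ground-truth labels (1 = lane, 0 = background), same shape
    """
    probs = np.clip(np.asarray(probs, dtype=np.float64), eps, 1.0 - eps)
    target = np.asarray(target, dtype=np.float64)

    # Assumed balancing scheme: inverse class frequency, scaled so that each
    # class contributes half of the total weight when both are present.
    fg_frac = target.mean()
    w_fg = 0.5 / max(fg_frac, eps)
    w_bg = 0.5 / max(1.0 - fg_frac, eps)

    # Weighted binary cross-entropy, averaged over all pixels.
    ce = -(w_fg * target * np.log(probs)
           + w_bg * (1.0 - target) * np.log(1.0 - probs)).mean()

    # Soft Dice: overlap between predicted probabilities and the target mask.
    intersection = (probs * target).sum()
    dice = (2.0 * intersection + eps) / (probs.sum() + target.sum() + eps)

    return ce + dice_weight * (1.0 - dice)
```

With thin lanes covering only a small fraction of the image, a model that predicts "background everywhere" scores well on plain cross-entropy. The class weights and the Dice term both penalize that failure mode, which is the usual motivation for combining them.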

At inference time, lane hypotheses are validated, filtered, and fused using semantic confidence maps and vehicle awareness, producing robust lane masks even under occlusions, clutter, or non-ideal road geometries.
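The validation and fusion step could look roughly like the sketch below. It assumes lane hypotheses arrive as lists of `(row, col)` pixel coordinates, vehicle detections as `(x1, y1, x2, y2)` boxes, and the semantic model outputs a per-pixel confidence map. The function `fuse_lanes`, its thresholds, and the scoring rule are all hypothetical choices for illustration, not the project's actual pipeline.

```python
import numpy as np

def fuse_lanes(conf_map, hypotheses, vehicle_boxes,
               min_conf=0.5, min_visible=0.3):
    """Keep lane hypotheses supported by the semantic confidence map.

    conf_map      : (H, W) array of lane confidences in [0, 1]
    hypotheses    : list of lanes, each a sequence of (row, col) points
    vehicle_boxes : list of (x1, y1, x2, y2) boxes in pixel coordinates
    Returns the fused binary mask and the indices of the kept hypotheses.
    """
    h, w = conf_map.shape

    # Mark pixels covered by detected vehicles as occluded.
    occluded = np.zeros((h, w), dtype=bool)
    for x1, y1, x2, y2 in vehicle_boxes:
        x1, x2 = np.clip([x1, x2], 0, w).astype(int)
        y1, y2 = np.clip([y1, y2], 0, h).astype(int)
        occluded[y1:y2, x1:x2] = True

    mask = np.zeros((h, w), dtype=np.uint8)
    kept = []
    for idx, pts in enumerate(hypotheses):
        pts = np.asarray(pts, dtype=int).reshape(-1, 2)
        in_bounds = ((pts[:, 0] >= 0) & (pts[:, 0] < h)
                     & (pts[:, 1] >= 0) & (pts[:, 1] < w))
        pts = pts[in_bounds]
        if len(pts) == 0:
            continue
        rows, cols = pts[:, 0], pts[:, 1]

        # Score only the points not hidden behind a vehicle, so an occluded
        # stretch does not drag down an otherwise well-supported lane.
        visible = ~occluded[rows, cols]
        if not visible.any() or visible.mean() < min_visible:
            continue
        score = conf_map[rows[visible], cols[visible]].mean()
        if score >= min_conf:
            mask[rows[visible], cols[visible]] = 1
            kept.append(idx)
    return mask, kept
```

In this sketch, occluded points are left out of the output mask so that lanes are never painted over vehicles. A different design could extrapolate the lane through the occluded region instead.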

This project demonstrates full ownership of the computer vision stack: architecture design, loss engineering, dataset debugging, GPU-safe training, and multi-model fusion. It bridges deep learning, geometry, and classical vision in a production-oriented setup.

Tech

- Core ML: 10 items
- Geometry: 5 items
- Perception Fusion: 5 items
- Vision: 11 items
- Detection: 3 items
- Inference: 4 items
- Experimentation: 8 items
- Scientific Stack: 5 items
- Infra: 6 items

Details

Project info

Next steps

- Improve processing speed for real-time use

- Deploy on an embedded system